You’ve designed your system, and you’ve deployed your SaaS application. Now what? This is where your focus shifts to optimization and tuning. Even a fully optimized SaaS application needs adjustment and continuous tuning as workload patterns change.

Along the way, you will also gain important operational insights, which can include unexpected costs, surprising performance issues, feature requests, and outages and risks that have to be dealt with. In this post, we’ll look at how to optimize the performance and cost of your SaaS application and review the future trends in SaaS you should keep an eye on.

Monitoring and Analytics for SaaS Applications

Every layer of your application should be monitored continuously. What you choose to monitor matters, and your choices will also be shaped by the tools you already use. As you move toward more proactive and advanced monitoring (e.g. observability), you will define your application KPIs along with the metrics and analytics needed to track them.
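As a minimal sketch of turning a KPI into something you can track, the Python below assumes a hypothetical KPI of the form “99% of requests succeed within 300 ms” and computes how much of the resulting error budget a batch of requests has consumed. The `KpiTarget` type, the threshold, and the sample data are illustrative assumptions, not a prescribed tool or metric.

```python
from dataclasses import dataclass


@dataclass
class KpiTarget:
    """Hypothetical KPI: the share of requests that must be 'good' (no error, under a latency threshold)."""
    name: str
    latency_threshold_ms: float
    target_ratio: float  # e.g. 0.99 means 99% of requests must be good


def good_request_ratio(latencies_ms, errors, threshold_ms):
    """Fraction of requests that succeeded AND finished within the latency threshold."""
    if len(latencies_ms) != len(errors):
        raise ValueError("latencies_ms and errors must have the same length")
    if not latencies_ms:
        return 1.0
    good = sum(1 for lat, err in zip(latencies_ms, errors) if not err and lat <= threshold_ms)
    return good / len(latencies_ms)


def error_budget_remaining(kpi, latencies_ms, errors):
    """Share of the error budget left: 1.0 means untouched, 0 or below means the KPI is breached."""
    allowed_bad = 1.0 - kpi.target_ratio
    if allowed_bad <= 0:
        raise ValueError("target_ratio must be below 1.0 to leave an error budget")
    actual_bad = 1.0 - good_request_ratio(latencies_ms, errors, kpi.latency_threshold_ms)
    return 1.0 - actual_bad / allowed_bad


checkout_kpi = KpiTarget("checkout latency", latency_threshold_ms=300.0, target_ratio=0.99)
latencies = [120.0] * 199 + [950.0]  # one slow request out of 200
errors = [False] * 200
print(f"{error_budget_remaining(checkout_kpi, latencies, errors):.2f}")  # prints 0.50
```

Tracking the remaining budget over time, rather than a single average latency, shows how close you are to breaching the KPI before users notice.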

Performance Optimization for SaaS Applications

Database platforms running on cloud storage can run into performance issues for several technical reasons. Here are some common contributing factors:

Network Latency

Cloud storage involves accessing data over a network, and network latency can impact database performance. Network latency refers to the delay in data transmission between the database server and the cloud storage. High network latency can lead to slower read and write operations, resulting in decreased database performance, and in turn a degraded application experience for end users.
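A rough worked example of why round-trip latency matters: if each storage operation must complete before the next one starts (as with many dependent reads or synchronous commits), latency alone caps throughput at 1 / (round-trip time + service time). The sketch below is a simplification that ignores parallelism and batching, and the latency values are illustrative.

```python
def max_sequential_ops_per_second(round_trip_ms: float, service_time_ms: float = 0.0) -> float:
    """Upper bound on dependent, one-at-a-time operations per second.

    Each operation waits for the network round trip plus the storage service
    time before the next can start, so the ceiling is 1 / (RTT + service time).
    """
    per_op_ms = round_trip_ms + service_time_ms
    if per_op_ms <= 0:
        raise ValueError("per-operation time must be positive")
    return 1000.0 / per_op_ms


for rtt in (0.5, 2.0, 10.0):
    print(f"RTT {rtt:>4} ms -> at most {max_sequential_ops_per_second(rtt):,.0f} ops/s")
```

Under these assumptions the same loop drops from 2,000 to 500 to 100 operations per second as round-trip time grows from 0.5 ms to 2 ms to 10 ms, which is why batching and running independent requests in parallel are common mitigations.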

Disk I/O Bottlenecks

Cloud storage relies on underlying storage infrastructure, such as disks or object storage systems. Disk I/O bottlenecks occur when the storage system cannot keep up with the rate of read and write requests from the database. Insufficient I/O throughput or high latency in the storage system can limit the performance of the database, causing delays in data retrieval and updates.

This leads to application slowness or potential failures as storage processes fail to keep up with the application demand. These slowdowns can happen during high application usage or during other online processes that are competing for access to the database (e.g. batch processing, backups, snapshotting, and online computation).
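To reason about whether storage can keep up, Little’s law (throughput = concurrency / latency) gives a quick estimate of the IOPS a workload actually drives, which you can compare with what is provisioned. In the sketch below, the queue depth, latency, demand, and provisioned IOPS are hypothetical numbers chosen to mimic an application peak overlapping with a backup job.

```python
def achieved_iops(outstanding_ios: int, avg_latency_ms: float) -> float:
    """Little's law: IOPS = outstanding I/Os / average latency (converted to seconds)."""
    return outstanding_ios * 1000.0 / avg_latency_ms


def io_headroom(demand_iops: float, provisioned_iops: float) -> float:
    """Fraction of provisioned IOPS left; a negative value means storage is the bottleneck."""
    return 1.0 - demand_iops / provisioned_iops


# 32 outstanding I/Os at 2 ms average latency sustain 16,000 IOPS.
print(achieved_iops(32, 2.0))

# Hypothetical peak: application traffic plus a backup job competing for the same volume.
app_iops, backup_iops, provisioned = 4_000, 3_000, 5_000
print(f"headroom, application only: {io_headroom(app_iops, provisioned):.0%}")
print(f"headroom, application + backup: {io_headroom(app_iops + backup_iops, provisioned):.0%}")
```

When headroom goes negative only during backup or batch windows, rescheduling those jobs can be cheaper than provisioning more IOPS.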

Storage Configuration

Inadequate storage configuration can impact database performance. Incorrectly configured settings, such as suboptimal block sizes, cache settings, or data placement policies, can all degrade performance, so it is essential to align the storage configuration with the specific requirements of the database workload.

The effect of misconfiguration is amplified as usage increases, and you will find that the user experience of your SaaS application can vary wildly depending on which configuration options you choose and how they combine. This is why your design needs to be adaptive, so that it can respond to performance problems that only show up in production.
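Block size interacts with storage limits in a way that is easy to work out: throughput equals IOPS multiplied by I/O size, so a volume with both an IOPS cap and a throughput cap is limited by whichever it hits first. The caps used below (5,000 IOPS and 200 MiB/s) are hypothetical, not the limits of any specific disk type.

```python
def effective_iops(io_size_kib: float, iops_cap: float, throughput_cap_mibps: float) -> float:
    """IOPS achievable at a given I/O size when both an IOPS cap and a throughput cap apply."""
    iops_allowed_by_throughput = throughput_cap_mibps * 1024.0 / io_size_kib
    return min(iops_cap, iops_allowed_by_throughput)


def effective_throughput_mibps(io_size_kib: float, iops_cap: float, throughput_cap_mibps: float) -> float:
    """Throughput = IOPS x I/O size, converted from KiB/s to MiB/s."""
    return effective_iops(io_size_kib, iops_cap, throughput_cap_mibps) * io_size_kib / 1024.0


IOPS_CAP, THROUGHPUT_CAP = 5_000, 200  # hypothetical volume limits
for size_kib in (8, 64, 256):
    iops = effective_iops(size_kib, IOPS_CAP, THROUGHPUT_CAP)
    mibps = effective_throughput_mibps(size_kib, IOPS_CAP, THROUGHPUT_CAP)
    bound = "IOPS-bound" if iops == IOPS_CAP else "throughput-bound"
    print(f"{size_kib:>3} KiB I/O: {iops:>6.0f} IOPS, {mibps:>6.1f} MiB/s ({bound})")
```

Small random I/O, typical of transactional workloads, tends to exhaust IOPS first, while large sequential I/O from scans or backups tends to exhaust throughput first, which is one reason block size and workload profile need to be matched.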

Data Distribution and Partitioning

When using distributed databases or sharding techniques, data distribution and partitioning can impact performance. Uneven distribution of data across nodes or improper partitioning strategies can lead to data imbalances, increased network traffic, and suboptimal query execution plans, resulting in degraded performance.

This is one of the most challenging architectural decisions because of how profound the effect can be on user experience and performance as the application scales and usage changes over time. Even an optimized design at one point in time may become inefficient as application usage changes and features evolve.
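A small simulation shows how the choice of partition key drives data imbalance. The sketch below assumes hash partitioning across 8 shards and a hypothetical dataset in which one large tenant owns half the rows; it compares partitioning by tenant alone with partitioning by tenant plus a row identifier, and reports skew as the largest shard divided by the mean shard size.

```python
import hashlib
from collections import Counter


def shard_for(key: str, num_shards: int) -> int:
    """Stable hash partitioning. Python's built-in hash() is randomized per process for str, so use SHA-256."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_shards


def skew_ratio(shard_ids, num_shards: int) -> float:
    """Largest shard's row count divided by the mean; 1.0 means perfectly even."""
    counts = Counter(shard_ids)
    sizes = [counts.get(shard, 0) for shard in range(num_shards)]
    mean = sum(sizes) / num_shards
    return max(sizes) / mean


NUM_SHARDS = 8
# Hypothetical data: one large tenant owns half of the 10,000 rows.
rows = ["tenant-big"] * 5_000 + [f"tenant-{i}" for i in range(5_000)]

by_tenant = [shard_for(tenant, NUM_SHARDS) for tenant in rows]
by_tenant_and_row = [shard_for(f"{tenant}:{i}", NUM_SHARDS) for i, tenant in enumerate(rows)]

print(f"key = tenant:       skew {skew_ratio(by_tenant, NUM_SHARDS):.2f}")
print(f"key = tenant + row: skew {skew_ratio(by_tenant_and_row, NUM_SHARDS):.2f}")
```

Partitioning by tenant alone puts all of the large tenant’s rows on one shard (a skew of at least 4.0 here), while adding a row-level component spreads them evenly. The trade-off is that queries scoped to a single tenant now fan out across shards, which is exactly the kind of decision that may need revisiting as usage changes.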

Concurrency and Locking

Database platforms handle concurrent access to data by multiple users or processes. In a cloud storage environment, the coordination of concurrent access and the management of locks can impact performance. Inefficient locking mechanisms or high contention for resources can lead to performance degradation and delays in query execution.
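One common way to reduce lock contention, shown here as an illustration rather than something prescribed above, is optimistic concurrency control: instead of holding a lock for the whole read-modify-write, each row carries a version number and a write succeeds only if the version is unchanged since it was read. The in-memory `VersionedStore` below is a hypothetical stand-in for a table with a version column.

```python
import threading
from dataclasses import dataclass


@dataclass(frozen=True)
class VersionedValue:
    value: int
    version: int


class VersionedStore:
    """Hypothetical in-memory stand-in for a table with a version column."""

    def __init__(self):
        self._rows = {}
        # Held only for the short read or compare-and-set, never across a whole transaction.
        self._lock = threading.Lock()

    def read(self, key):
        with self._lock:
            return self._rows.get(key, VersionedValue(0, 0))

    def compare_and_set(self, key, expected_version, new_value):
        """Write only if no one else has updated the row since it was read."""
        with self._lock:
            current = self._rows.get(key, VersionedValue(0, 0))
            if current.version != expected_version:
                return False  # conflict: caller must re-read and retry
            self._rows[key] = VersionedValue(new_value, current.version + 1)
            return True


def increment_with_retry(store, key, max_attempts=100):
    """Optimistic read-modify-write: re-read and retry on version conflicts."""
    for _ in range(max_attempts):
        snapshot = store.read(key)
        if store.compare_and_set(key, snapshot.version, snapshot.value + 1):
            return True
    return False


store = VersionedStore()
successes = []


def worker():
    for _ in range(500):
        successes.append(increment_with_retry(store, "counter"))


threads = [threading.Thread(target=worker) for _ in range(4)]
for t in threads:
    t.start()
for t in threads:
    t.join()
# Every successful increment is reflected exactly once; no update is silently lost.
print(store.read("counter").value, sum(successes))
```

In a relational database, the same idea is usually expressed as an `UPDATE ... WHERE id = ? AND version = ?` followed by a check of the affected row count. It works best when conflicts are rare; under heavy contention on the same rows, the retries themselves become the bottleneck.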

Cloud Provider Performance

The performance of the cloud storage service itself can influence database performance. Factors such as the quality of underlying hardware, service-level agreements (SLAs), and the provider’s network infrastructure can impact the overall performance and availability of the storage service, consequently affecting the database performance.

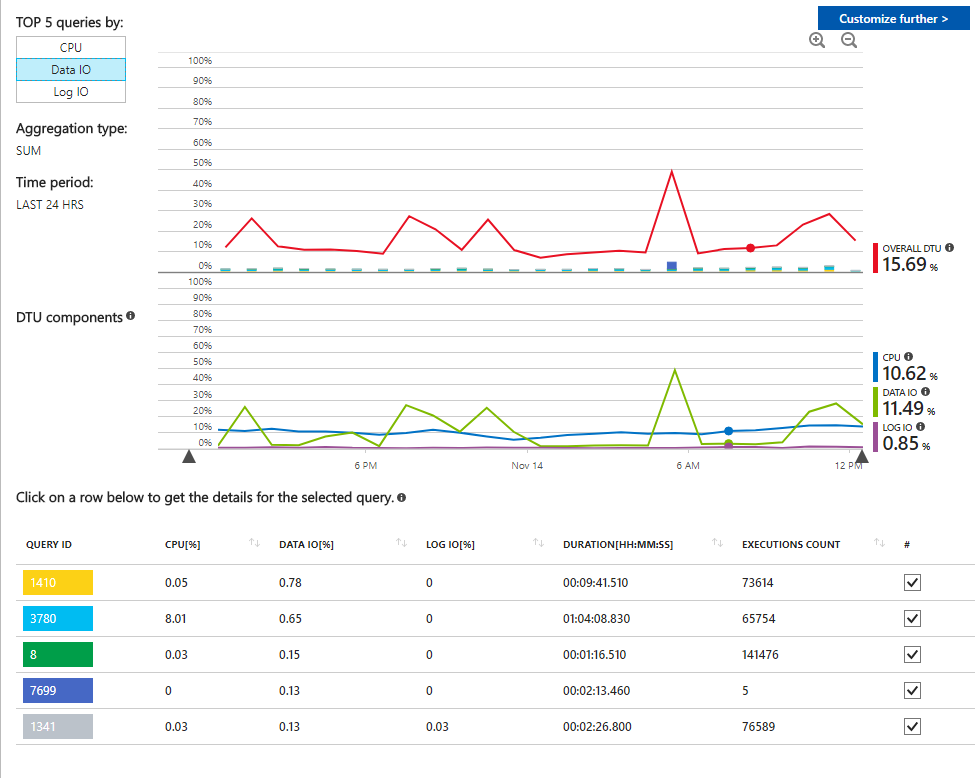

To address and mitigate these performance issues, it is important to monitor and analyze the storage performance metrics, optimize the storage configuration for the specific database workload, and implement proper data distribution and partitioning strategies.

Regular performance testing, tuning, and benchmarking can help identify and resolve bottlenecks and inefficiencies.

Collaborating with your cloud storage provider and drawing on their expertise can also help you optimize performance and resolve issues specific to their storage infrastructure.
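For the regular testing and benchmarking mentioned above, a small harness that reports latency percentiles rather than an average makes regressions easier to spot. This is a minimal sketch using only the standard library; `run_sample_query` is a hypothetical placeholder you would replace with a real call against your database, and the iteration counts are arbitrary.

```python
import statistics
import time


def benchmark(operation, iterations=200, warmup=20):
    """Time `operation` repeatedly and return latency percentiles in milliseconds."""
    if iterations < 2:
        raise ValueError("need at least 2 iterations to compute percentiles")
    for _ in range(warmup):  # warm caches and connections before measuring
        operation()
    samples = []
    for _ in range(iterations):
        start = time.perf_counter()
        operation()
        samples.append((time.perf_counter() - start) * 1000.0)
    # 99 cut points; "inclusive" interpolates within the observed range instead of extrapolating.
    cuts = statistics.quantiles(samples, n=100, method="inclusive")
    return {"p50": cuts[49], "p95": cuts[94], "p99": cuts[98], "max": max(samples)}


def run_sample_query():
    """Hypothetical placeholder: replace with a real query against your database."""
    sum(i * i for i in range(10_000))


for name, value in benchmark(run_sample_query).items():
    print(f"{name}: {value:.3f} ms")
```

Running the same harness before and after a configuration change, and at different times of day, helps separate real improvements from noise.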

Cost Management and Optimization for SaaS Applications

The price-performance ratio is about price AND performance. These are continuous trade-offs, and making them well also requires keeping up with changes to your cloud providers’ features, because those changes can significantly affect both price and performance.
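One way to make the price-performance trade-off concrete is to normalize cost by the work a configuration actually does, for example cost per million transactions. The two configurations, their monthly prices, and their sustained throughput below are hypothetical; in practice you would plug in your own benchmark results and current provider pricing.

```python
from dataclasses import dataclass

SECONDS_PER_30_DAY_MONTH = 30 * 24 * 3600


@dataclass
class Config:
    name: str
    monthly_cost_usd: float  # hypothetical price, not a real quote
    sustained_tps: float     # measured transactions per second under your workload


def cost_per_million_tx(cfg: Config, utilization: float = 1.0) -> float:
    """Monthly cost divided by the transactions actually served, scaled to one million."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    tx_per_month = cfg.sustained_tps * utilization * SECONDS_PER_30_DAY_MONTH
    return cfg.monthly_cost_usd / tx_per_month * 1_000_000


small = Config("small", monthly_cost_usd=300, sustained_tps=400)
large = Config("large", monthly_cost_usd=700, sustained_tps=1_200)

print(f"small @100%: ${cost_per_million_tx(small):.2f} per million transactions")
print(f"large @100%: ${cost_per_million_tx(large):.2f} per million transactions")
print(f"large @30%:  ${cost_per_million_tx(large, 0.3):.2f} per million transactions")
```

With these made-up numbers, the larger configuration is cheaper per transaction when fully used (about $0.23 versus $0.29 per million), but at 30% utilization it costs about $0.75 per million, so the better choice depends on how much of the capacity you will actually use.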

Scaling Considerations for SaaS Applications

Capacity vs Performance vs Cost Tradeoffs

Your application and database performance are tightly tied to physical components, including capacity. Scaling VM disks for database workloads involves increasing the storage capacity or performance of the disks attached to the VM (e.g. moving to a larger or higher-performance disk, or upgrading the Azure VM to a size with higher storage throughput limits). While this can provide additional resources to handle increased data storage or processing demands, it can also have performance implications of its own.

For example, you might choose to upgrade from a smaller VM size like Standard D2s v4 (2 vCPUs, 8 GB RAM) to a larger VM size like Standard E4ds v5 (4 vCPUs, 32 GB RAM). These are just two of the many VM families supporting Azure SQL, and they vary by CPU type, scale limits, and network and storage throughput.
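A simple first pass at choosing a size is to apply some headroom to your observed peak CPU and memory and pick the smallest size that fits. The sketch below uses the vCPU and memory figures for the two sizes mentioned above plus two neighboring sizes (Standard D4s v4 and Standard E2ds v5). The 30% headroom and the peak values are assumptions, and a real decision also has to account for per-size disk and network limits and price, which this sketch ignores. Sizes are ordered by vCPU count first, since core count often drives database licensing cost.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class VmSize:
    name: str
    vcpus: int
    memory_gb: int


# vCPU and memory figures only; per-size disk/network limits and prices are deliberately omitted.
CANDIDATES = [
    VmSize("Standard_D2s_v4", 2, 8),
    VmSize("Standard_D4s_v4", 4, 16),
    VmSize("Standard_E2ds_v5", 2, 16),
    VmSize("Standard_E4ds_v5", 4, 32),
]


def smallest_fit(peak_vcpus: float, peak_memory_gb: float, headroom: float = 1.3,
                 candidates=CANDIDATES) -> Optional[VmSize]:
    """Smallest size (fewest vCPUs, then least memory) covering peak usage plus headroom."""
    need_cpu = peak_vcpus * headroom
    need_mem = peak_memory_gb * headroom
    fits = [vm for vm in candidates if vm.vcpus >= need_cpu and vm.memory_gb >= need_mem]
    return min(fits, key=lambda vm: (vm.vcpus, vm.memory_gb)) if fits else None


# Hypothetical peaks from monitoring: about 1.2 vCPUs busy and 20 GB of memory in use.
print(smallest_fit(1.2, 20))
```

In this example memory, not CPU, forces the jump to Standard E4ds v5, the kind of signal that points toward a memory-optimized family rather than simply a bigger general-purpose VM.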

You’re likely wondering how to make the right decision when scaling Azure VM disks for databases. There are many features, risks, and limitations to consider.

Performance Impact

It’s crucial to carefully assess the performance characteristics and limitations of the underlying disk infrastructure, such as the IOPS (Input/Output Operations Per Second) and throughput capabilities. Inadequate scaling or improper disk configuration can result in decreased performance, leading to slower response times and degraded database performance.
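On Azure, each VM size has its own disk IOPS and throughput limits in addition to each disk’s limits, so striping more disks together only helps until you reach the VM’s cap. The sketch below captures that rule of thumb. The per-disk and per-VM figures are hypothetical placeholders, so look up the published limits for the disk types and VM sizes you are considering; the sketch also ignores caching and bursting, which can change the numbers in practice.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DiskSpec:
    iops: int
    mbps: int


def effective_limits(disks, vm_iops_cap: int, vm_mbps_cap: int):
    """Striped disks add up their limits, but the VM-level caps still apply."""
    total_iops = sum(d.iops for d in disks)
    total_mbps = sum(d.mbps for d in disks)
    return min(total_iops, vm_iops_cap), min(total_mbps, vm_mbps_cap)


disk = DiskSpec(iops=5_000, mbps=200)   # hypothetical per-disk limits
VM_IOPS_CAP, VM_MBPS_CAP = 12_800, 290  # hypothetical VM-level limits

for n in (1, 2, 4):
    iops, mbps = effective_limits([disk] * n, VM_IOPS_CAP, VM_MBPS_CAP)
    print(f"{n} disk(s): {iops} IOPS, {mbps} MB/s")
```

With these placeholder numbers, going from two to four disks adds no throughput (the VM’s 290 MB/s cap is already reached at two disks) and only raises IOPS from 10,000 to the VM’s 12,800 cap.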

Downtime and Data Loss

Scaling VM disks for database workloads often involves performing operations like disk resizing, disk striping, or migrating to different disk types. These operations can require downtime or impact the availability of the database. If not executed correctly, there is a risk of data loss or corruption during the scaling process.

It is essential to follow best practices, perform thorough backups, and carefully plan and execute the disk scaling operations to minimize the risk of downtime and data loss.

Cost Considerations

Scaling Azure VM disks for database workloads may come with additional costs. Increasing the storage capacity or performance of disks can result in higher monthly charges for disk usage.

It’s important to consider the cost implications and optimize the disk scaling strategy based on the specific needs of the database workload. Careful monitoring of disk usage and performance can help identify opportunities for optimization and cost savings.
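Spotting cost-saving opportunities in monitoring data can be as simple as flagging disks that use well under what they pay for. In this sketch the thresholds, disk names, and usage figures are hypothetical, and `peak_iops` is assumed to be something like the 95th-percentile IOPS over a recent window. Both conditions are required because, on disk types where performance scales with provisioned size, shrinking a disk also lowers its IOPS.

```python
from dataclasses import dataclass


@dataclass
class DiskUsage:
    name: str
    provisioned_gib: int
    used_gib: float
    provisioned_iops: int
    peak_iops: float  # assumed: e.g. 95th-percentile IOPS over the last 30 days


def downsizing_candidates(disks, max_capacity_use=0.5, max_iops_use=0.4):
    """Return (name, capacity use, IOPS use) for disks well below BOTH capacity and IOPS limits."""
    candidates = []
    for d in disks:
        capacity_use = d.used_gib / d.provisioned_gib
        iops_use = d.peak_iops / d.provisioned_iops
        if capacity_use <= max_capacity_use and iops_use <= max_iops_use:
            candidates.append((d.name, round(capacity_use, 2), round(iops_use, 2)))
    return candidates


fleet = [
    DiskUsage("sql-data-01", provisioned_gib=1024, used_gib=300, provisioned_iops=5000, peak_iops=900),
    DiskUsage("sql-data-02", provisioned_gib=1024, used_gib=200, provisioned_iops=5000, peak_iops=4500),
    DiskUsage("sql-log-01", provisioned_gib=512, used_gib=400, provisioned_iops=2300, peak_iops=600),
]
print(downsizing_candidates(fleet))  # prints [('sql-data-01', 0.29, 0.18)]
```

Here `sql-data-02` is excluded despite using under 20% of its capacity, because it needs most of its provisioned IOPS.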

To mitigate these risks, organizations should adopt the following best practices:

- Thoroughly assess the performance characteristics and limitations of the underlying disk infrastructure before scaling.

- Plan and test disk scaling operations carefully, following proper backup and recovery procedures to minimize downtime and data loss risks.

- Monitor the performance of the database workload after scaling and fine-tune configurations if necessary.

- Continuously monitor and optimize disk usage to ensure cost-effectiveness.

By understanding these risks and implementing appropriate mitigation strategies, organizations can effectively scale Azure VM disks for their database workloads while minimizing potential issues and ensuring optimal performance and availability.

What about a Cloud Provider DBaaS?

Moving towards scalable database options (e.g. Azure SQL, Google Cloud Databases, AWS RDS) can reduce some of the toil and effort for IT operations, but there are still trade-offs.

- Customization Limitations – DBaaS offerings often provide a predefined set of features and configurations. If your application has specific requirements or relies on advanced database functionality that is not available in the DBaaS offering, it can limit your ability to customize and tailor the database to your needs.

- Vendor Lock-In – Adopting a DBaaS solution may lead to vendor lock-in, as migrating the database to a different provider or on-premises infrastructure can be complex and time-consuming. If you anticipate the need for flexibility or want to avoid dependency on a single provider, a DBaaS solution might not be the best fit.

- Performance Trade-offs – While DBaaS offerings provide convenience and ease of management, they may introduce performance trade-offs. Shared resources, limited control over underlying infrastructure, or inability to fine-tune database configurations to specific requirements can impact performance, especially for demanding or latency-sensitive workloads.

- Lack of Cost and Utilization Transparency – Proprietary cloud DBaaS offerings are easy to use but often do not expose detailed performance and cost metrics. You may also find that you need to scale up or down suddenly, and the built-in options do not always let you reverse that change easily.

- Limited to cloud-based DBaaS – On-premises versions of cloud providers’ DBaaS offerings are limited or unavailable, which may require you to use a validated partner solution for hybrid database services or to run multiple databases.

Future Trends in SaaS Applications

You have likely noticed trends in what we’ve discussed here and in the future projects your team is considering. Three areas driving innovation in SaaS applications are AI and ML, serverless computing, and edge computing for data.

Artificial Intelligence (AI) and Machine Learning (ML) Integration

AI and ML technologies are increasingly being integrated into SaaS applications to deliver enhanced functionality and intelligence. SaaS platforms leverage AI algorithms to automate tasks, provide personalized recommendations, improve data analysis and insights, and enable predictive capabilities.

Future SaaS applications will likely leverage AI and ML to automate complex decision-making processes, enable natural language processing and understanding, and offer advanced analytics and predictive modeling capabilities.

The wide availability of GPUs and optimized cloud hardware configurations is making high-performance computing more accessible, which is likely to change much of how your team designs and manages your SaaS environment.

Serverless Computing

Serverless computing is gaining traction in the SaaS application landscape. With serverless architectures, developers can focus on writing code without worrying about infrastructure management. Future SaaS applications will likely leverage some serverless computing to achieve greater scalability, cost-efficiency, and rapid development cycles. Serverless architectures enable automatic scaling, pay-as-you-go pricing models, and event-driven processing, allowing SaaS applications to be more flexible and agile.

Serverless data options are available today but still carry many limitations and risks for high-performance applications. Another risk is that serverless technologies are proprietary to each cloud provider and may not extend to hybrid environments.

The distinct advantage of serverless is “scale to zero,” which can reduce your costs. The trade-off is usually added complexity in application design and operations. You also may not have many parts of your application that truly scale to zero, so containerization and microservices architectures remain the most widely used methods for scaling.
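Whether scale to zero actually saves money depends on how many hours a component is busy. As a back-of-the-envelope sketch, the functions below compare an always-on instance with a serverless option that charges a higher rate while active and a small rate while idle. All rates are hypothetical, and real serverless pricing is usually metered in finer-grained units than hours.

```python
HOURS_PER_MONTH = 730  # common approximation (8,760 hours / 12)


def always_on_cost(hourly_rate: float) -> float:
    """Monthly cost of an instance that runs around the clock."""
    return hourly_rate * HOURS_PER_MONTH


def serverless_cost(active_hours: float, active_rate: float, idle_rate: float = 0.0) -> float:
    """Pay the active rate while busy and the (often near-zero) idle rate the rest of the month."""
    if not 0 <= active_hours <= HOURS_PER_MONTH:
        raise ValueError("active_hours must be between 0 and HOURS_PER_MONTH")
    return active_hours * active_rate + (HOURS_PER_MONTH - active_hours) * idle_rate


def break_even_active_hours(always_on_rate: float, active_rate: float, idle_rate: float = 0.0) -> float:
    """Active hours per month above which always-on becomes the cheaper option.

    Solves always_on_rate * H = active_rate * h + idle_rate * (H - h) for h.
    """
    if active_rate <= idle_rate:
        raise ValueError("active_rate must exceed idle_rate")
    return HOURS_PER_MONTH * (always_on_rate - idle_rate) / (active_rate - idle_rate)


# Hypothetical rates: $0.50/h always-on vs. $1.20/h active and $0.02/h idle for serverless.
hours = break_even_active_hours(0.50, 1.20, 0.02)
print(f"break-even at about {hours:.0f} active hours per month ({hours / HOURS_PER_MONTH:.0%} of the month)")
```

With these made-up rates, serverless is cheaper only if the component is busy less than roughly 41% of the month, which is why components with steady load often stay on containers.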

Edge Computing and Data Services

Edge computing is a distributed computing pattern that brings computation and data storage closer to where they are needed in order to improve response times and save bandwidth. In the context of data-centric applications, edge computing refers to processing and analyzing data at the edge of the network, near the source of the data. This can be done by deploying smaller-scale, localized data centers, edge devices, or even end devices such as IoT devices.

An example technical use case for using edge computing for data processing is a smart manufacturing facility. The facility may be equipped with numerous sensors and devices that generate a massive amount of data in real-time. Instead of transmitting all this data to a centralized cloud or data center for processing, edge computing brings data processing closer to the source, minimizing latency and bandwidth requirements.

By deploying edge computing nodes at the manufacturing site, data from sensors and devices can be processed locally in near real-time. This enables the facility to perform real-time analytics, monitoring, and quality control without relying heavily on cloud connectivity. For example, the edge nodes can process sensor data to detect anomalies or predict equipment failures, enabling proactive maintenance and reducing downtime. Critical data insights can be obtained at the edge, allowing for quick decision-making and optimizing operational efficiency.

Edge computing is becoming increasingly popular for running ML, AI, and other computational processes locally, because it reduces processing time. Database operations stay close to the client, avoiding the round trips to a central location that can drastically increase latency.
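To make the manufacturing example concrete, the sketch below shows the kind of lightweight processing an edge node might run: a rolling z-score detector that flags readings far from the recent mean, so that only anomalies, rather than every reading, need to be sent to the cloud. The window size, threshold, and sensor values are hypothetical, and a production system would likely use a more robust model.

```python
import math
from collections import deque


class RollingAnomalyDetector:
    """Flags readings more than `threshold` standard deviations from the recent rolling mean."""

    def __init__(self, window: int = 50, threshold: float = 3.0, min_samples: int = 10):
        self.recent = deque(maxlen=window)
        self.threshold = threshold
        self.min_samples = min_samples

    def observe(self, value: float) -> bool:
        is_anomaly = False
        if len(self.recent) >= self.min_samples:
            mean = sum(self.recent) / len(self.recent)
            std = math.sqrt(sum((x - mean) ** 2 for x in self.recent) / len(self.recent))
            is_anomaly = std > 0 and abs(value - mean) / std > self.threshold
        # Every reading joins the window, so the baseline adapts to gradual drift.
        self.recent.append(value)
        return is_anomaly


# Hypothetical temperature sensor: a steady 20.0-20.4 pattern with one spike at index 60.
readings = [20.0 + 0.1 * (i % 5) for i in range(100)]
readings[60] = 35.0

detector = RollingAnomalyDetector()
to_cloud = [(i, v) for i, v in enumerate(readings) if detector.observe(v)]
print(f"forwarded {len(to_cloud)} of {len(readings)} readings: {to_cloud}")
```

Only the single spike is forwarded here, which illustrates how edge processing can cut bandwidth while still surfacing the events that matter.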

These three areas are just a few of the many innovations that will create new opportunities for SaaS applications to become even more valuable.

Ready to Optimize Your SaaS Application?

Download our white paper, “Building and Optimizing High-Performance SaaS Applications” for your free guide!
